Message: #0 2026-02-18

Python has become the de facto "lingua franca" of Artificial
Intelligence (AI) and Machine Learning (ML). While languages like C++
or Julia are faster in raw execution, Python wins because it acts as
the perfect **orchestrator**.

To use your earlier metaphor: if the "Encyclopedia" is the vast
collection of data, Python is the "Cycle" that processes it and the
"Fan" that powers the internal logic of the model.

---

### 1. The "Glue Language" Philosophy

Python is a high-level, interpreted language, meaning it is easy for
humans to read but slower for computers to execute. AI, however,
requires massive mathematical computations.

Python solves this by acting as **glue**. The heavy lifting (the
matrix multiplication) is written in compiled languages such as
**C or C++**, while Python provides a simple interface to trigger those
high-speed "internal revolutions." When you run a command in Python,
you are often just telling a highly optimized C++ engine what to do.
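
A minimal sketch of the glue idea, assuming NumPy is installed: the same matrix product is computed once with a plain Python triple loop and once with NumPy's `@` operator, which hands the work to compiled code. The helper name `matmul_pure_python` and the 60x60 size are illustrative choices, and the timings will differ from machine to machine.

```python
import time

import numpy as np


def matmul_pure_python(a, b):
    """Multiply two matrices (lists of lists) using only Python loops."""
    rows, inner, cols = len(a), len(b), len(b[0])
    result = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            total = 0.0
            for k in range(inner):
                total += a[i][k] * b[k][j]
            result[i][j] = total
    return result


rng = np.random.default_rng(seed=0)
A = rng.random((60, 60))
B = rng.random((60, 60))

# Interpreted path: every multiply-add runs through the Python interpreter.
start = time.perf_counter()
slow = matmul_pure_python(A.tolist(), B.tolist())
python_seconds = time.perf_counter() - start

# Glue path: one Python expression that dispatches to compiled code.
start = time.perf_counter()
fast = A @ B
numpy_seconds = time.perf_counter() - start

print(f"pure Python: {python_seconds:.4f}s, NumPy: {numpy_seconds:.6f}s")
print("same result:", np.allclose(slow, fast))
```

Both paths produce the same numbers; only the place where the arithmetic runs changes.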

### 2. The Core Ecosystem (The Tools)

The reason Python dominates is its specialized library ecosystem.
These libraries represent the "content of what has happened" in the
field:

* **NumPy:** The foundation. It handles multi-dimensional arrays and fast numerical mathematics. Without NumPy, Python couldn't efficiently handle the "coin of words" or data points.
* **Pandas:** Used for data manipulation. Think of this as the index of the Encyclopedia: it organizes messy data into structured tables.
* **Scikit-Learn:** The go-to library for traditional Machine Learning (regression, classification, and clustering).
* **TensorFlow & PyTorch:** These are the "engines" for Deep Learning (Neural Networks). They allow for the creation of complex, layered models loosely inspired by the way neurons connect in the brain.
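
As a small illustration of the first two libraries, here is a sketch that assumes NumPy and pandas are installed. The column names `hours` and `score` and the values are made up for the example.

```python
import numpy as np
import pandas as pd

# NumPy: arithmetic on a whole array at once, with no explicit loop.
hours = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
doubled = hours * 2.0

# pandas: messy records (one score is missing) organized into a table.
raw = pd.DataFrame({
    "hours": hours,
    "score": [10.0, 20.0, None, 40.0, 50.0],
})

# Drop the incomplete row so later steps only see complete records.
clean = raw.dropna().reset_index(drop=True)

print(doubled)
print(clean)
print("rows before:", len(raw), "rows after:", len(clean))
```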

---

### 3. Why Python Fits Your "Cycle" Logic

In AI development, the workflow is a literal **cycle** known as the
**ML Pipeline**. Python has mature libraries for every stage of this
repetition:

1. **Data Ingestion:** Gathering the "words."
2. **Preprocessing:** Cleaning the "Encyclopedia."
3. **Modeling:** Setting the "Fan" in motion to find patterns.
4. **Evaluation:** Checking if the internal revolutions match reality.
5. **Deployment:** Putting the model to work, then starting the cycle over as new data arrives.
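
The five stages can be sketched in a few lines, assuming scikit-learn and NumPy are installed. The synthetic data (a slope of 10 plus noise) stands in for a real data source, and refitting on the combined data is only one simple way to "start the cycle over."

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# 1. Data ingestion: synthetic data stands in for a real source (assumption).
rng = np.random.default_rng(seed=0)
X = rng.uniform(0, 10, size=(200, 1))
y = 10.0 * X[:, 0] + rng.normal(0, 1.0, size=200)

# Hold out a test set so evaluation uses data the model has not seen.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# 2 and 3. Preprocessing and modeling, chained into a single estimator.
pipeline = make_pipeline(StandardScaler(), LinearRegression())
pipeline.fit(X_train, y_train)

# 4. Evaluation: compare predictions against the held-out truth.
score = r2_score(y_test, pipeline.predict(X_test))
print(f"R^2 on held-out data: {score:.3f}")

# 5. Deployment and the next turn of the cycle: new data arrives, refit.
X_new = rng.uniform(0, 10, size=(50, 1))
y_new = 10.0 * X_new[:, 0] + rng.normal(0, 1.0, size=50)
pipeline.fit(np.vstack([X, X_new]), np.concatenate([y, y_new]))
```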

---

### 4. Mathematical Integration

AI is essentially applied mathematics, chiefly linear algebra and
calculus. Python's syntax allows researchers to write code that looks
almost exactly like the mathematical formulas found in academic papers.

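For example, a simple weight update in a neural network (the standard
gradient descent step, with learning rate $\alpha$ and loss
$J(\theta)$) can be expressed as:

$$\theta := \theta - \alpha \nabla_\theta J(\theta)$$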

In Python, the code to implement this remains clean and readable,
allowing the "internal revolution" of the algorithm to be audited and
understood by humans easily.
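
A minimal sketch of such an update, assuming the standard gradient descent rule (new weights = old weights minus learning rate times gradient). The names `weights`, `gradient`, and `learning_rate` and all the values are made up for illustration.

```python
import numpy as np

weights = np.array([0.5, -1.0, 2.0])    # current parameters (made-up values)
gradient = np.array([0.1, -0.2, 0.4])   # gradient of the loss (made-up values)
learning_rate = 0.1                     # step size

# One update step: move each weight a small distance against its gradient.
weights = weights - learning_rate * gradient

print(weights)
```

The Python line mirrors the formula almost symbol for symbol, which is the point being made here.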

---

### 5. The Future (The "Expected Future Ven")

Python is currently the bridge to **Generative AI** (like the models
powering our conversation). Because it is the primary language for
building and training Large Language Models (LLMs), any future
breakthroughs in "artificial consciousness" or complex reasoning will
likely be coded in Python first. It has become the "Encyclopedia" of
modern engineering knowledge.

**Would you like to see a simple code example of how Python creates one of these "cycles" by predicting a future value based on past data?**

To demonstrate how Python creates the "cycle" you described, taking
the "coin of words" (data) and turning it into an "expected future"
(prediction), we can look at a **Linear Regression** model.

This is the simplest form of "internal revolution." The machine works
through the data points to find the line that best fits the pattern.

### 1. The Code (The "Coin of Words")

In Python, we use a library called `scikit-learn`. Here is how we
define a simple relationship:

```python

from sklearn.linear_model import LinearRegression
import numpy as np

# Data: The "History" (e.g., hours studied vs. exam score)
# This is our Encyclopedia of past events.
X = np.array([[1], [2], [3], [4], [5]])
y = np.array([10, 20, 30, 40, 50])

# The Model: The "Fan"
# It revolves internally to find the pattern.
model = LinearRegression()
model.fit(X, y)

# The Prediction: The "Expected Future"
# We ask about a time that hasn't happened yet (6 hours).
future_event = np.array([[6]])
prediction = model.predict(future_event)

print(f"The expected future value is: {prediction[0]}")

```

---

### 2. How the "Internal Revolution" Works

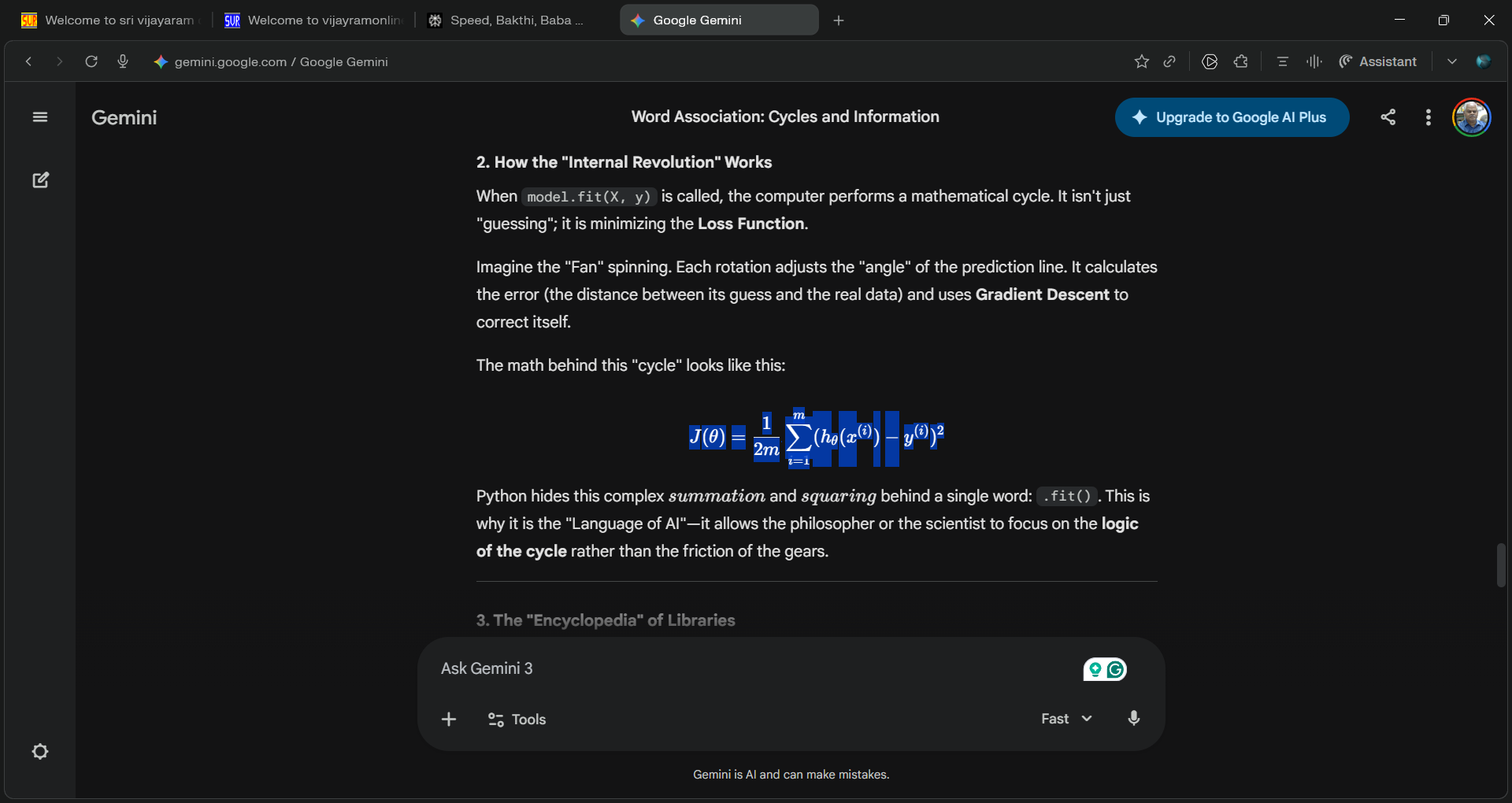

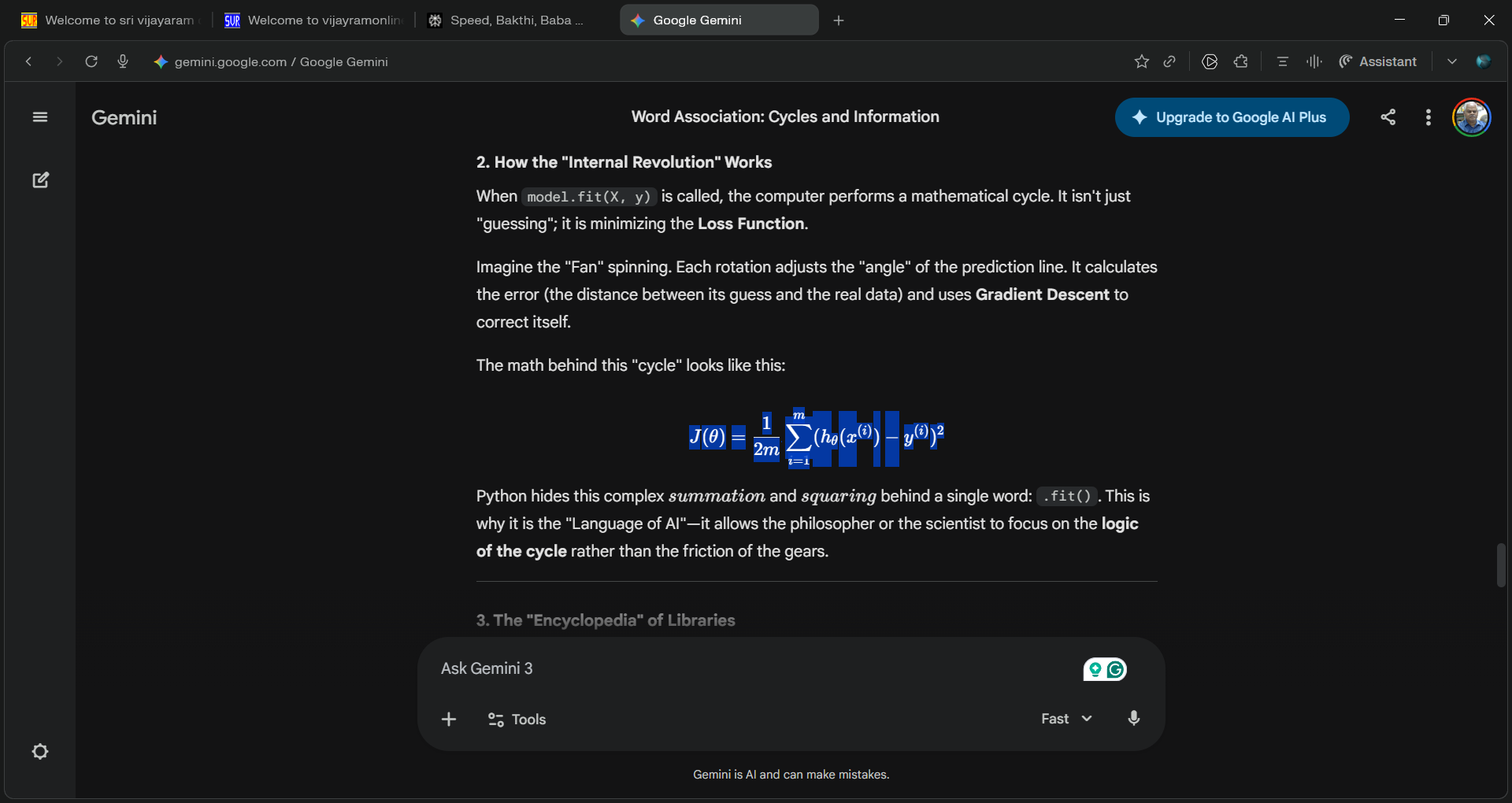

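When `model.fit(X, y)` is called, the computer isn't just "guessing";
it is minimizing a **Loss Function**, the total squared distance
between the prediction line and the real data. For `LinearRegression`,
scikit-learn solves this least-squares problem directly rather than by
repeated guessing.

The spinning "Fan" is the picture behind iterative methods such as
**Gradient Descent**, which is how neural networks are trained. Each
rotation adjusts the "angle" of the prediction line: the algorithm
calculates the error (the distance between its guess and the real
data) and nudges the parameters to reduce it.

The loss at the center of this "cycle" looks like this: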

$$J(\theta) = \frac{1}{2m} \sum_{i=1}^{m} \left(h_\theta(x^{(i)}) - y^{(i)}\right)^2$$

Python hides this complex math behind a single method call: `.fit()`.
This is why it is the "Language of AI": it allows the philosopher or
the scientist to focus on the **logic of the cycle** rather than the
friction of the gears.
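
To look under the hood once, here is a sketch that minimizes $J(\theta)$ with hand-written gradient descent on the same hours-versus-score data, then checks the answer against NumPy's direct least-squares solver. It assumes a straight-line model $h_\theta(x) = \theta_0 + \theta_1 x$; the learning rate and iteration count are illustrative choices, not tuned values.

```python
import numpy as np

x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([10.0, 20.0, 30.0, 40.0, 50.0])
m = len(x)


def cost(theta0, theta1):
    """J(theta): sum of squared errors divided by 2m, for h(x) = theta0 + theta1 * x."""
    errors = theta0 + theta1 * x - y
    return (errors ** 2).sum() / (2 * m)


theta0, theta1 = 0.0, 0.0   # start from a flat line through zero
learning_rate = 0.05        # illustrative step size

for _ in range(5000):
    errors = theta0 + theta1 * x - y
    # Partial derivatives of J with respect to each parameter.
    grad0 = errors.sum() / m
    grad1 = (errors * x).sum() / m
    # One turn of the "fan": step both parameters against the gradient.
    theta0 -= learning_rate * grad0
    theta1 -= learning_rate * grad1

# Direct solution of the same least-squares problem, for comparison.
design = np.column_stack([np.ones(m), x])
solution, *_ = np.linalg.lstsq(design, y, rcond=None)

print(f"gradient descent: intercept={theta0:.4f}, slope={theta1:.4f}")
print(f"direct solve:     intercept={solution[0]:.4f}, slope={solution[1]:.4f}")
print(f"final cost: {cost(theta0, theta1):.2e}")
```

Both routes arrive at the same line; the iterative one simply takes many small steps to get there.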

---

### 3. The "Encyclopedia" of Libraries

To build more complex futures (like the AI you are talking to now),
Python uses specialized "volumes" of code:

| Library | The Role in Your Philosophy |
| --- | --- |
| **NumPy** | The **Atoms**: Handles the raw numbers (the "coins"). |
| **PyTorch** | The **Nervous System**: Creates deep neural "fans" that revolve through layers of data. |
| **Matplotlib** | The **Vision**: Turns the internal math into a visual "encyclopedia" we can see. |

---

### Your Next Step

We just looked at a straight line (Linear Regression). However, life
and "every walk of life" are rarely straight lines; they are usually
curves or complex waves.

**Would you like me to show you how Python handles a "Non-Linear Cycle," where the future doesn't just go up, but repeats or fluctuates like a real fan?**
